For the last few years, we’ve been mesmerized by the power of Large Language Models (LLMs). They are brilliant conversationalists, poets, and coders. But they have always had a secret, fatal flaw: they are “brains in a jar,” completely disconnected from the real, live, changing world. An AI could write you a perfect email, but it couldn’t send it. It could guess your company’s sales figures, but it couldn’t look them up.

This fundamental gap between knowing and doing has been the single biggest barrier to truly useful AI. To solve this, developers built brittle, one-off custom integrations, a chaotic “Wild West” where every AI model and every software tool spoke a different language.

That era is now ending. Enter the Model Context Protocol (MCP), the new universal standard that is fundamentally changing how AI models think, talk, and act. This isn’t just another small update; it’s the plumbing for the next generation of AI, moving us from passive oracles to active, agentic partners. This is the dawn of true MCP AI.

The “N×M” Problem We Had to Solve

Before MCP, if you wanted your AI chatbot to connect to, say, Salesforce, Google Calendar, and a local file system, you had to build three separate, custom connectors. If you then wanted to swap your AI model (from Anthropic’s Claude to an in-house model, for example), you might have to rebuild all three of those connectors.

This is the “N×M” integration problem: N models trying to connect to M tools can require up to N×M separate connectors, a count that grows multiplicatively and quickly becomes unmanageable. This sprawl made it very hard to build integrations that were reliable, scalable, and secure. The AI’s “context” was limited to the chat window, and it had no persistent, real-world awareness.
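To make the arithmetic concrete, here is a minimal sketch comparing the two approaches. The function names are illustrative, and the model assumes the worst case where every model needs a connector to every tool.

```python
def point_to_point_connectors(models: int, tools: int) -> int:
    """Without a shared protocol, every model needs its own connector to every tool."""
    return models * tools


def shared_protocol_adapters(models: int, tools: int) -> int:
    """With a shared protocol, each model and each tool implements it once."""
    return models + tools


if __name__ == "__main__":
    for models, tools in [(2, 3), (3, 10), (5, 50)]:
        print(
            f"{models} models x {tools} tools: "
            f"{point_to_point_connectors(models, tools)} custom connectors vs "
            f"{shared_protocol_adapters(models, tools)} protocol adapters"
        )
```

The gap widens as either side grows: five models and fifty tools would need 250 custom connectors, but only 55 implementations of a shared protocol.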

What is MCP? A Look at the MCP Framework

The Model Context Protocol is an open standard, first introduced by Anthropic in late 2024, that defines a universal language for AI models to communicate with external tools, data, and services.

Think of it as the “USB-C port for AI.”

Instead of a dozen different proprietary plugs, there is now one standard, open connector. A developer can build one “MCP server” for their application (like Slack, Google Drive, or a company database), and any MCP-compatible AI application can connect to it without a custom integration.

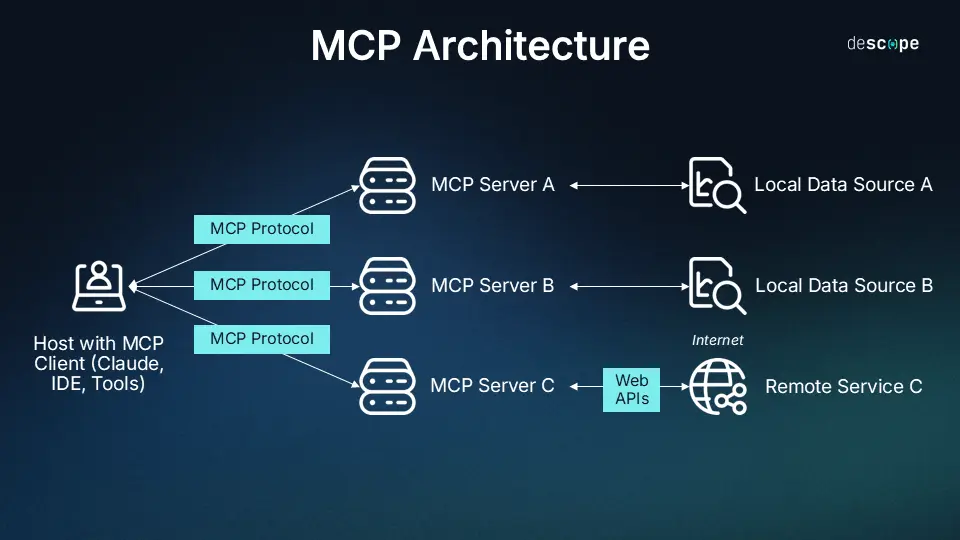

This MCP framework is built on a simple but powerful client-server MCP architecture:

- MCP Host: This is the AI application you interact with (e.g., ChatGPT, a coding assistant, or a custom chatbot).

- MCP Client: This is the component inside the Host that speaks the MCP language. It manages the connections.

- MCP Server: This is the external tool or data source. It’s a lightweight program that “exposes” its capabilities to the AI.

The impact of this standardization is hard to overstate. When an entire industry, from startups to giants, agrees on a standard, it ignites an explosion of innovation. Rapid adoption of the Model Context Protocol by OpenAI, Google DeepMind, Microsoft, and SAP signals that this is not a niche tool; it is becoming the new default.

This entire setup, from the AI model to the external tools, creates a single, cohesive model context system where information can flow in two directions.
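The three roles can be pictured with a toy, in-memory sketch. Everything here is hypothetical (`ToyHost`, `ToyClient`, `ToyServer`, and the two example servers), and it assumes one client per connected server. A real deployment would exchange protocol messages over a transport rather than call Python methods directly.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToyServer:
    """Stands in for an MCP server: exposes named capabilities."""
    name: str
    tools: dict[str, Callable[..., str]] = field(default_factory=dict)

    def list_tools(self) -> list[str]:
        return sorted(self.tools)

    def call_tool(self, tool_name: str, **arguments) -> str:
        return self.tools[tool_name](**arguments)


@dataclass
class ToyClient:
    """Stands in for an MCP client: manages the connection to one server."""
    server: ToyServer

    def discover(self) -> list[str]:
        return self.server.list_tools()

    def invoke(self, tool_name: str, **arguments) -> str:
        return self.server.call_tool(tool_name, **arguments)


@dataclass
class ToyHost:
    """Stands in for the host application: owns one client per server."""
    clients: dict[str, ToyClient] = field(default_factory=dict)

    def connect(self, server: ToyServer) -> None:
        self.clients[server.name] = ToyClient(server)

    def available_tools(self) -> dict[str, list[str]]:
        return {name: client.discover() for name, client in self.clients.items()}


# Two hypothetical servers with one tool each.
calendar = ToyServer("calendar", {"list_upcoming_events": lambda: "Standup at 09:00"})
files = ToyServer("files", {"read_file": lambda path: f"(contents of {path})"})

host = ToyHost()
host.connect(calendar)
host.connect(files)
print(host.available_tools())
print(host.clients["files"].invoke("read_file", path="notes.txt"))
```

The point of the split is that the host never needs server-specific code: adding a new server means one more `connect` call, not a new integration.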

How It Works: The Language of Model Context Communication

So, how does this “talking” actually happen? The protocol is about more than just a connection; it’s about a conversation.

When an MCP-enabled AI application connects to an MCP server, its client essentially asks, “What can you do?”

The server responds by describing its capabilities in a way the AI can understand, typically by offering two things:

- Tools: These are actions the AI can execute. For example, a Google Calendar server might offer tools like `create_event(title, time, attendees)` or `list_upcoming_events()`.

- Resources: This is data the AI can read. This could be a static document (like in RAG), a live database, or even a real-time data stream.
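As a rough illustration, this is what a server’s self-description might look like once parsed into Python. The calendar server and its `calendar://` URI are hypothetical. The field names (`name`, `description`, `inputSchema`, `uri`, `mimeType`) reflect the MCP specification’s tool and resource listings as commonly documented, so treat them as an assumption and check the current spec before relying on them.

```python
# Hypothetical result of asking a calendar server to list its tools.
# Each tool declares its parameters as a JSON Schema object.
TOOLS_LIST_RESULT = {
    "tools": [
        {
            "name": "create_event",
            "description": "Create a calendar event.",
            "inputSchema": {
                "type": "object",
                "properties": {
                    "title": {"type": "string"},
                    "time": {"type": "string", "description": "ISO 8601 start time"},
                    "attendees": {"type": "array", "items": {"type": "string"}},
                },
                "required": ["title", "time"],
            },
        },
        {
            "name": "list_upcoming_events",
            "description": "List upcoming events.",
            "inputSchema": {"type": "object", "properties": {}},
        },
    ]
}

# Hypothetical result of asking the same server to list its resources.
RESOURCES_LIST_RESULT = {
    "resources": [
        {"uri": "calendar://primary/today", "name": "Today's agenda", "mimeType": "text/plain"}
    ]
}


def describe_capabilities(tools_result: dict, resources_result: dict) -> list[str]:
    """Summarize what the server offers in one line per capability."""
    lines = []
    for tool in tools_result["tools"]:
        params = ", ".join(tool["inputSchema"].get("properties", {}))
        lines.append(f"tool {tool['name']}({params})")
    for resource in resources_result["resources"]:
        lines.append(f"resource {resource['uri']}")
    return lines


for line in describe_capabilities(TOOLS_LIST_RESULT, RESOURCES_LIST_RESULT):
    print(line)
```

Because parameters are declared as a schema rather than buried in documentation, the model can see which arguments a tool expects before it tries to call it.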

This standardized model context communication (built on JSON-RPC 2.0) is the magic. The AI no longer has to guess. It can see the tools it has available, plan a multi-step operation, and then execute those tools to get a real-world result.
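For a feel of the wire format, here is a minimal sketch of a JSON-RPC 2.0 tool call and a toy dispatcher that answers it. The `jsonrpc`, `id`, `method`, `params`, `result`, and `error` fields and the `-32601` / `-32602` error codes come from JSON-RPC 2.0 itself. The `tools/call` method name and the `content` result shape follow the MCP specification as commonly documented and should be treated as assumptions. `make_request`, `handle_request`, and the calendar tool are hypothetical helpers, not part of any SDK.

```python
import json
from typing import Any, Callable


def make_request(request_id: int, method: str, params: dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 request."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": method, "params": params})


def handle_request(raw: str, tools: dict[str, Callable[..., str]]) -> str:
    """Toy server side: run the named tool and wrap the outcome in a response."""
    request = json.loads(raw)
    response: dict[str, Any] = {"jsonrpc": "2.0", "id": request["id"]}
    if request["method"] != "tools/call":
        response["error"] = {"code": -32601, "message": "Method not found"}
        return json.dumps(response)
    params = request.get("params", {})
    tool = tools.get(params.get("name"))
    if tool is None:
        response["error"] = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
        return json.dumps(response)
    text = tool(**params.get("arguments", {}))
    response["result"] = {"content": [{"type": "text", "text": text}], "isError": False}
    return json.dumps(response)


def list_upcoming_events() -> str:
    # Hypothetical tool body; a real server would query a calendar API here.
    return "Standup at 09:00"


TOOLS = {"list_upcoming_events": list_upcoming_events}

request = make_request(1, "tools/call", {"name": "list_upcoming_events", "arguments": {}})
response = json.loads(handle_request(request, TOOLS))
print(response["result"]["content"][0]["text"])
```

The response carries the same `id` as the request, which is how a client matches answers to questions when several calls are in flight.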

This is the core of what makes MCP a true AI model integration protocol: it’s not just about data retrieval, it’s about functional integration.

How MCP Creates a Truly Contextual AI Model

This brings us to the most important shift: the very nature of the AI’s “context.”

Before MCP, “context” was just the chat history. The AI only “knew” what you had just told it. This is why it would forget your name or what you were talking about five messages ago.

With the MCP framework, an AI becomes a truly contextual AI model by gaining access to persistent, real-time, and personal context.

- Real-Time Context:

  - Before: “I’m sorry, my knowledge is limited to 2023.”

  - After: “I see that your package (via the FedEx MCP server) is 10 minutes away. I’ve also checked the weather (via the Weather MCP server) and it looks like it will start raining, so you should retrieve it quickly.”

- Personal Context:

  - Before: “Who is Vasu?”

  - After: “Vasu is the contact you emailed last week about your Fiber connection (via the GDrive MCP server). Would you like me to draft a follow-up?”

- Action Context:

  - Before: “You can create a new code repository on GitHub by going to…”

  - After: “Okay, I have created the new repository ‘My-New-Project’ for you (via the GitHub MCP server) and granted access to your team.”

This new, expanded context goes a long way toward addressing AI’s hallucination problem. The AI has far less need to make up facts because it can look them up from a trusted, live source. Its answers become grounded in reality.

The Future: From Talking to Doing

It’s important to clarify that MCP is not the same as Retrieval-Augmented Generation (RAG). RAG is a “read-only” technique for retrieving static documents to inform an answer. RAG is a fantastic approach, and an MCP server can absolutely use RAG as one of its “Resources.”

But MCP is much bigger. It’s the “read-write-execute” framework for building agents that can act.

The Model Context Protocol is the critical, unglamorous-but-essential layer that will power the next generation of AI. It’s the plumbing that connects the “brain-in-a-jar” to the real world, finally allowing it to see, to remember, and for the first time to do.
